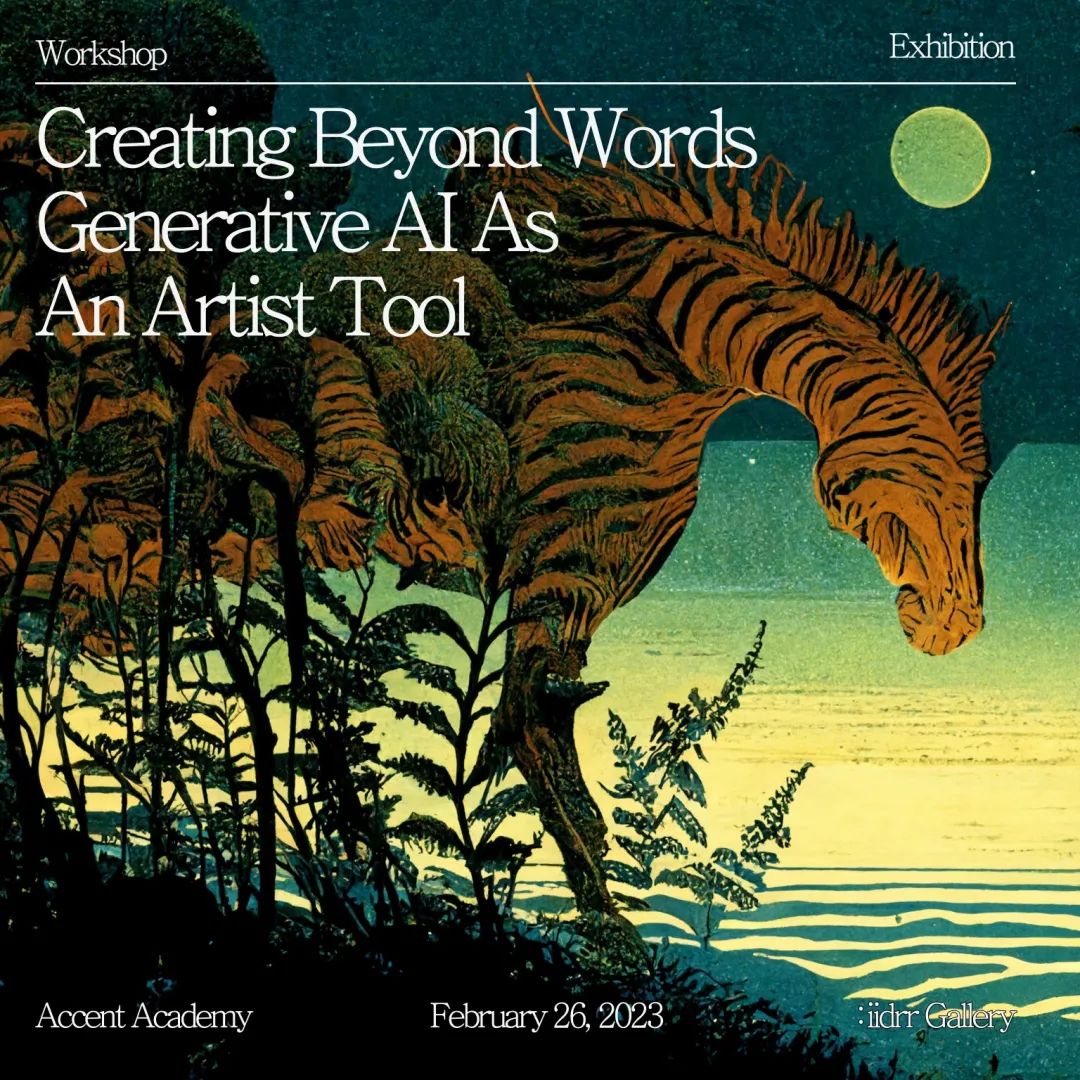

Creating Beyond Words - Stable Diffusion as an Artist Tool

2023/2/26

3:30-6:30 PM

Over the past year, unprecedented advances in text-based generative AI models have sparked widespread attention, discussion, and mainstream adoption of these co-creative interfaces. The response has mixed novelty, excitement, and curiosity with concern, anger, and insult.

Alongside this, booming open-source text-to-image model development is expanding access to AI tools beyond experts, tech giants, and professional technologists. Among these open-source treasures, the recently released Stable Diffusion text-to-image model by stability.ai stands out for its fast training speed, highly customizable settings, and impressive generation quality.

In this workshop led by new media artist Tong Wu, we will delve into the history and capabilities of text-based generative AI tools, with a focus on the what and the how of the Stable Diffusion model. Together we will look at the potential of AI tools like Stable Diffusion for exploring new modes of content creation and for helping us re-examine our language patterns. We’ll also discuss how such tools could revolutionize the workflows of poets, writers, and artists, and what caveats we should look out for when creating with these AI models, considering the ways in which AI is emerging as a new, socially engaged creative agent.